
A vacuum chamber is a sealed enclosure from which air is evacuated to create a localized, low-pressure environment. Vacuum chambers and vacuum gloveboxes are used in a variety of applications, including scientific research, manufacturing, product development, performance testing, and simulation environments. Vacuum chamber applications include, but are not limited to, leak testing, stress testing, semiconductor failure analysis, degassing, drying, distillation, permeability testing, coating, specific gravity determination, atmospheric simulations, and inert gas storage.

Fungi proliferate in dark, damp, warm environments, which are also ideal for opportunistic pests, molds, bacteria, and plant viruses. Agar-based techniques are therefore highly susceptible to contamination. Fungal contamination is difficult to reverse and spreads quickly through the surrounding environment.

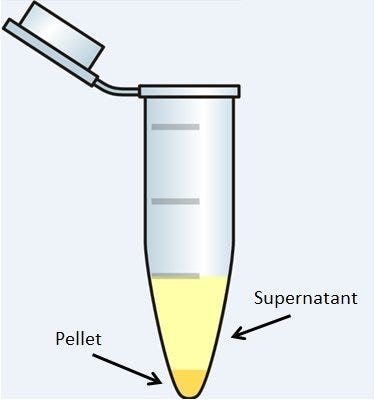

Centrifugation is one of the most widely used laboratory techniques for separating materials in biochemistry, molecular biology, medicine, food science, and industry. It comes down to gravity and mass: given enough time, particles in a heterogeneous mixture will separate according to their size and density, with larger, denser particles settling first. Smaller, less dense particles may also migrate downward, but not always; some never settle and instead remain suspended in solution. Centrifuges speed up this process by subjecting samples to a force many times that of gravity. The technique has proven so powerful and so widely useful that centrifuges have been common laboratory equipment since the late 19th century.
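The force a centrifuge applies is usually expressed as relative centrifugal force (RCF), a multiple of Earth's gravity (×g). RCF depends on both rotor speed and the radius at which the sample sits. The sketch below converts between rpm and RCF using RCF = ω²r / g. It is illustrative only: the function names are hypothetical, and the radius you pass in should come from your rotor's documentation.

```python
import math

STANDARD_GRAVITY = 9.80665  # m/s^2


def rcf_from_rpm(rpm: float, radius_cm: float) -> float:
    """Relative centrifugal force (multiples of g) at a given speed and radius."""
    omega = 2 * math.pi * rpm / 60  # angular velocity in rad/s
    return omega ** 2 * (radius_cm / 100) / STANDARD_GRAVITY  # radius converted to m


def rpm_from_rcf(rcf: float, radius_cm: float) -> float:
    """Rotor speed (rpm) needed to reach a target RCF at a given radius."""
    omega = math.sqrt(rcf * STANDARD_GRAVITY / (radius_cm / 100))
    return omega * 60 / (2 * math.pi)


print(round(rcf_from_rpm(10_000, 10)))  # about 11182 x g at a 10 cm radius
```

This is equivalent to the familiar shortcut RCF ≈ 1.118 × 10⁻⁵ × r(cm) × rpm². Because radius matters, a protocol quoted in ×g transfers between rotors more reliably than one quoted in rpm.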

Water’s ability to dissolve compounds, together with its polarity, hydrogen bonding, melting and boiling points, heat capacity, and vaporization characteristics, arguably makes it the most versatile substance we know. It’s also ubiquitous and plentiful: life on Earth depends on it, most plants and animals can’t survive without it, and scientists can’t run labs without it.

Water is the most common reagent in the laboratory, and although water quality is often overlooked, the grade of water used in an application is critical. Even trace amounts of salts or biological contaminants can compromise cell cultures or skew analytical measurements of biological macromolecules.
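Ionic contamination in lab water is commonly tracked by conductivity or its reciprocal, resistivity. The sketch below converts a conductivity reading to resistivity and compares it against a threshold. It assumes the reading is already temperature-compensated to 25 °C. The 18 MΩ·cm default is an assumed "ultrapure" cut-off often quoted for Type I water; check it against the standard your lab actually follows. The function names are hypothetical.

```python
ASSUMED_TYPE_I_MIN_MOHM_CM = 18.0  # assumed ultrapure threshold at 25 °C; verify locally


def resistivity_mohm_cm(conductivity_us_cm: float) -> float:
    """Convert conductivity (µS/cm) to resistivity (MΩ·cm); they are reciprocals."""
    if conductivity_us_cm <= 0:
        raise ValueError("conductivity must be positive")
    return 1.0 / conductivity_us_cm


def meets_grade(conductivity_us_cm: float,
                min_resistivity_mohm_cm: float = ASSUMED_TYPE_I_MIN_MOHM_CM) -> bool:
    """True if the temperature-compensated reading meets the resistivity threshold."""
    return resistivity_mohm_cm(conductivity_us_cm) >= min_resistivity_mohm_cm


print(round(resistivity_mohm_cm(0.055), 2))  # 18.18 MΩ·cm
print(meets_grade(1.0))                      # False: only 1 MΩ·cm
```

Theoretically pure water reaches about 18.2 MΩ·cm at 25 °C. A reading near that value therefore indicates very few dissolved ions, but conductivity says nothing about organic or biological contaminants.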

Researchers have been culturing bacterial and eukaryotic cells for decades to elucidate their biological functions and to develop and evaluate treatments for disease. While culturing cells under atmospheric conditions can yield informative results, these studies often require an environment that more closely mimics actual physiological conditions.

In vivo, animal cells are exposed to oxygen concentrations that range from 1% to 12%. At normal atmospheric conditions, oxygen is present at a concentration of around 21%. Many anaerobic microorganisms cannot carry out proper metabolic processes in the presence of oxygen. In fact, atmospheric concentrations of oxygen are often toxic to these cells.

To meet these challenges, researchers have developed specialized chambers that control the gas environment, supporting the growth of both bacterial and eukaryotic cells under more physiologically relevant conditions.
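Oxygen percentages become more meaningful as partial pressures, because cells respond to partial pressure. In a humidified gas at 37 °C, water vapor takes up part of the total pressure. The sketch below assumes sea-level barometric pressure (760 mmHg) and saturated water vapor at 37 °C (about 47 mmHg). Both are adjustable, hypothetical defaults rather than values from any particular chamber.

```python
SEA_LEVEL_MMHG = 760.0        # assumed barometric pressure
WATER_VAPOR_37C_MMHG = 47.0   # assumed saturated water vapor pressure at 37 °C


def po2_mmhg(o2_percent: float,
             barometric_mmhg: float = SEA_LEVEL_MMHG,
             water_vapor_mmhg: float = WATER_VAPOR_37C_MMHG) -> float:
    """Partial pressure of O2 (mmHg) for a given O2 percentage in humidified gas."""
    if not 0 <= o2_percent <= 100:
        raise ValueError("o2_percent must be between 0 and 100")
    return o2_percent / 100 * (barometric_mmhg - water_vapor_mmhg)


print(round(po2_mmhg(21), 2))                      # 149.73: room air, humidified at 37 °C
print(round(po2_mmhg(1), 2), round(po2_mmhg(12), 2))  # 7.13 85.56: the 1% to 12% range
```

Under these assumptions, the 1% to 12% in vivo range corresponds to roughly 7 to 86 mmHg, versus about 150 mmHg for humidified room air.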

Following closely on a blog post published on Laboratory-Equipment.com called “How to Improve Pipetting Techniques,” we present this discussion of gravimetric pipette calibration. Most labs are required to calibrate instruments periodically according to documented protocols. Whether the work is done by lab staff or a vendor, it’s a time-consuming and exacting process. The alternative? Data and test results that may be questioned or challenged.

Considerations for Calibration

Laboratories calibrate pipettes to ensure they are functioning within given (documented or established) tolerances. Pipettes that are not routinely calibrated can dispense volumes that differ substantially from the set volume, producing ambiguous or even erroneous data sets.
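In a gravimetric check, each dispense of water is weighed. Each mass is then converted to a volume with a correction factor Z (µL/mg), which accounts for water density and air buoyancy. The sketch below computes the mean volume, the systematic error (accuracy), and the coefficient of variation (precision). The default Z of 1.0029 µL/mg is an assumed value for roughly 20 °C and standard pressure; take the correct Z for your conditions from the standard your lab follows. Evaporation correction is omitted, and the function name is hypothetical.

```python
from statistics import mean, stdev

ASSUMED_Z_UL_PER_MG = 1.0029  # assumed Z-factor near 20 °C; look up for actual conditions


def gravimetric_summary(masses_mg: list[float], nominal_ul: float,
                        z: float = ASSUMED_Z_UL_PER_MG) -> dict[str, float]:
    """Convert weighed dispenses to volumes and summarize accuracy and precision."""
    if len(masses_mg) < 2:
        raise ValueError("need at least two replicate weighings")
    volumes = [m * z for m in masses_mg]  # mass (mg) -> volume (µL)
    mean_v = mean(volumes)
    return {
        "mean_ul": mean_v,
        # Systematic error: how far the mean is from the set volume, in percent.
        "systematic_pct": (mean_v - nominal_ul) / nominal_ul * 100,
        # Random error: sample standard deviation relative to the mean, in percent.
        "cv_pct": stdev(volumes) / mean_v * 100,
    }


print(gravimetric_summary([99.8, 100.1, 99.9, 100.2, 100.0], nominal_ul=100.0))
```

The results would then be compared against the acceptance limits set by the manufacturer or by your lab's documented protocol. No limits are hard-coded here, because they vary by pipette type and volume.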

Pipetting is one of the most common operations performed in labs. It is both a measuring technique and the means of transferring small volumes of fluid. The routine can become rote, but it’s critical to follow best practices; with such small sample volumes, even trivial mistakes influence results.

How Many Standard Deviations From the Mean? Preventing Statistical Anomalies in Data Sets

Whether you are collecting data for a premarket submission to the FDA or acquiring publication-quality data to satisfy the most demanding peer reviewer, statistical outliers can undermine confidence in a data set. Many sources of variability exist when handling microliter volumes of liquid for experimental analysis.
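The question in the heading, "how many standard deviations from the mean?", is the z-score. The sketch below flags replicates whose |z| exceeds a chosen cut-off k, using the sample standard deviation. Both the cut-off and the function name are illustrative assumptions. A formal test such as Grubbs' test is a more rigorous alternative when a data set must withstand regulatory or peer review.

```python
from statistics import mean, stdev


def flag_outliers(values: list[float], k: float = 3.0) -> list[int]:
    """Return indices of values more than k sample standard deviations from the mean."""
    if len(values) < 3:
        raise ValueError("need at least three values")
    m, s = mean(values), stdev(values)
    if s == 0:
        return []  # all values identical: nothing can be an outlier
    return [i for i, v in enumerate(values) if abs(v - m) / s > k]


replicates = [10.0, 10.1, 9.9, 10.0, 10.2, 9.8, 10.1, 9.9, 10.0, 12.5]
print(flag_outliers(replicates, k=2.5))  # [9]
print(flag_outliers(replicates, k=3.0))  # []
```

The second call shows a known limitation. With n values, |z| computed from the sample standard deviation can never exceed (n − 1)/√n, which is about 2.85 for n = 10. A 3-SD rule therefore cannot flag anything in a set that small, however suspicious the value.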

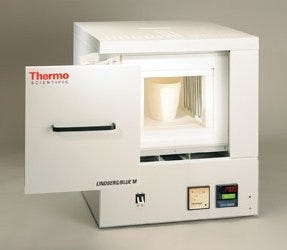

Furnaces have been part of laboratories for hundreds of years. The first chemists to experiment with very high temperatures were brave indeed, since the flammability of many substances was not always known at the time. Even today, with our most modern processes and equipment, high-temperature heating remains essential in chemistry and manufacturing. Furnace design has advanced considerably over the years, improving efficiency and safety, and as industry has grown, so has the range of applications for furnaces.

Here, we look at some lab furnace types—how they work and how they are used.

Box and Muffle Furnaces


Instrument options abound for preparing, incubating, washing, or chemically fixing samples using kinetic motion. Lab mixers, including orbital shakers, vortex mixers, rockers, rollers, and rotators, are offered in a variety of configurations (capacity, speed, run time, tilt angle, and more). These common instruments have been a necessary component of basic experimentation and sample preparation for decades.

The diversity of these mixing instruments is matched only by the variety of media and containers they are used with. One must therefore consider not only the procedure being performed but also the nature of the samples. Here we discuss this equipment and its most common applications to help you select the right solution.

What are these instruments?

Orbital Shakers & Vortexers

Since its advent in 1995, miniaturized microarray analysis has become a mainstay analytical technique in genomic, proteomic, pharmaceutical, and clinical laboratories worldwide. The initial microarray chips, developed by Pat Brown and colleagues at Stanford University, used nucleic acid probes deposited on a substrate to analyze gene expression in cells. After this initial focus, the technology rapidly expanded to use other, related probes (such as DNA, RNA, peptides, and carbohydrates).

In addition to broadening the types of molecular probes, the technology also expanded the range of substrates to which probes can be attached. Glass slides, plastic or silica chips, and silica or polystyrene beads can now all serve as the medium for constructing a microarray.